Edge Computing: The Next Big Thing in Emerging Technology

How the Future of Computing Is Moving Away From the Cloud and Closer to You

Introduction

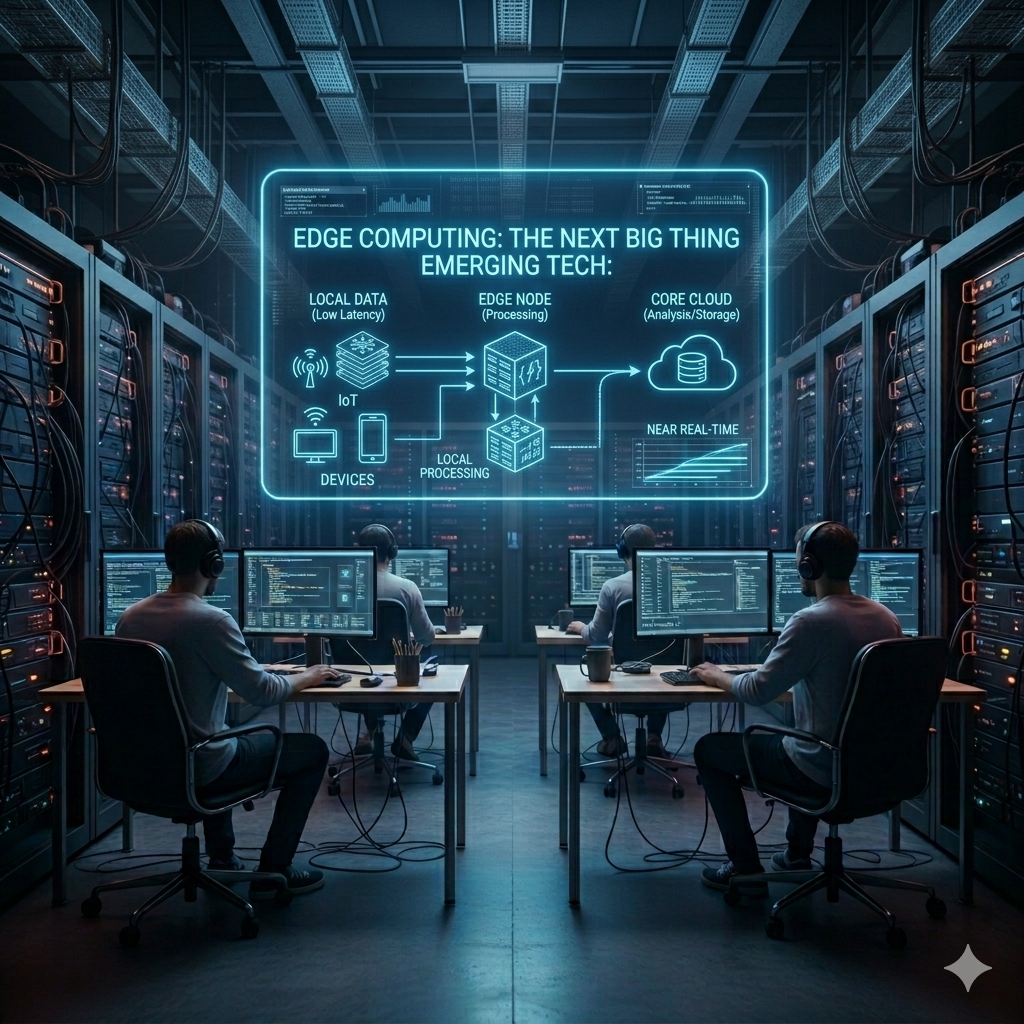

Edge Computing: The Next Big Thing in Emerging Tech

We are living in an era of unprecedented technological transformation. Every decade brings with it a technology so powerful, so fundamentally disruptive, that it reshapes entire industries, rewrites business models, and changes the way human beings interact with the world around them. In the 1990s, it was the internet. In the 2000s, it was the smartphone. In the 2010s, it was cloud computing and artificial intelligence. And now, as we move deeper into the 2020s, a new technology is rapidly emerging as the defining infrastructure shift of our time: edge computing.

Edge computing is not just an incremental improvement over existing technology. It represents a fundamental rethinking of where computation happens, how data flows through networks, and how intelligent systems interact with the physical world. It is the technology that will make truly autonomous vehicles possible, power the factories of the future, enable real-time remote surgery, and bring intelligence to billions of devices that today are little more than dumb sensors.

Yet despite its enormous significance, edge computing remains poorly understood outside of specialized technology circles. Many people have never heard of it. Others have heard the term but cannot explain what it means or why it matters. This article aims to change that. In the sections that follow, we will take a thorough, clear-eyed look at edge computing: what it is, why it emerged, how it works, where it is being applied today, what challenges it must overcome, and what the world will look like when it becomes truly mainstream.

By the end of this article, you will not just understand edge computing — you will understand why it may be the most important emerging technology of the next decade.

The Cloud Computing Era: A Brilliant Solution With Real Limits

To understand why edge computing is emerging now, we first need to understand the technology it is evolving beyond: cloud computing. Cloud computing was a genuine revolution. Before the cloud, a company that wanted to run software at scale generally had to buy, install, and maintain its own physical servers. This was expensive, slow, and inefficient. The emergence of cloud providers like Amazon Web Services, Microsoft Azure, and Google Cloud Platform changed everything. Suddenly, companies could access virtually unlimited computing power on demand, paying only for what they used, without owning a single server.

Cloud computing powered the explosive growth of the internet economy. It enabled startups to compete with established enterprises. It made streaming services, social networks, ride-sharing apps, and e-commerce platforms possible at global scale. For the applications of the 2010s — web apps, mobile apps, data analytics, machine learning model training — cloud computing was and remains an outstanding solution.

But as the 2020s brought new categories of applications and use cases, the limitations of a purely cloud-centric architecture began to show. The core problem is physics: data takes time to travel. Even at the speed of light, sending data from a device in Mumbai to a cloud data center in Singapore and receiving a response back takes time — typically between 20 and 100 milliseconds for well-connected regions, and much longer for remote areas. For a human browsing a website, this latency is imperceptible. But for an autonomous vehicle making split-second decisions, a surgical robot responding to a doctor’s hand movements, or an industrial machine reacting to a safety sensor, even 50 milliseconds of delay is far too long.
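The physical floor on that latency can be checked with a quick back-of-the-envelope calculation. The sketch below is illustrative only: it assumes a great-circle distance of roughly 3,900 km between Mumbai and Singapore and a signal speed in optical fiber of about 200,000 km/s. Neither figure comes from a measurement in this article.

```python
# Propagation-only estimate of network round-trip time.
# Both constants below are assumptions chosen for illustration.

SPEED_IN_FIBER_KM_PER_S = 200_000  # roughly two-thirds of the speed of light in vacuum


def min_round_trip_ms(distance_km: float) -> float:
    """Return the round-trip time in milliseconds, counting propagation delay only."""
    one_way_s = distance_km / SPEED_IN_FIBER_KM_PER_S
    return 2 * one_way_s * 1000


mumbai_singapore_km = 3_900  # approximate great-circle distance (assumed)
print(f"{min_round_trip_ms(mumbai_singapore_km):.1f} ms")  # prints 39.0 ms
print(f"{min_round_trip_ms(10):.2f} ms")  # edge node 10 km away: prints 0.10 ms
```

Real routes are longer than the great-circle path and add routing, queuing, and server processing time, so observed latencies sit above this floor. The comparison still shows why moving the computation closer is the only way to cut the delay.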

This is the gap that edge computing fills — and it is a gap that is growing larger every year as the number of connected devices and the demands placed on them continue to escalate.

What Is Edge Computing? A Clear Definition

Edge computing is a distributed computing architecture in which data processing, storage, and intelligence are moved from centralized cloud data centers to locations physically close to — or at — the point where data is generated and consumed. The “edge” refers to the geographic and network edge: the boundary between the digital network and the physical world, where devices, sensors, and users actually exist.

In practical terms, edge computing means placing computing resources — servers, processors, storage, networking equipment — in locations like cell towers, factory floors, hospital rooms, retail stores, vehicles, and even individual devices themselves, rather than in distant data centers. Data is processed locally, decisions are made locally, and only relevant information is sent to the cloud for longer-term storage and analysis.
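A minimal sketch of that pattern, assuming a hypothetical edge node that receives a batch of numeric sensor readings: it makes the alert decision locally and hands the cloud only a compact summary. The names `Summary` and `process_locally` are invented for this example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Summary:
    """Compact record sent to the cloud in place of the raw readings."""
    count: int
    mean_value: float
    max_value: float
    alerts: int


def process_locally(readings: list[float], alert_threshold: float) -> Summary:
    """Evaluate readings at the edge and return only a summary for upload."""
    if not readings:
        raise ValueError("readings must not be empty")
    # The local decision: count readings that exceed the threshold.
    alerts = sum(1 for r in readings if r > alert_threshold)
    return Summary(len(readings), mean(readings), max(readings), alerts)


summary = process_locally([20.0, 21.0, 35.0, 22.0], alert_threshold=30.0)
print(summary)
```

Four raw readings become one small record here. With thousands of readings per second, the same idea is what keeps upstream bandwidth manageable.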

The result is a computing architecture that is faster, more resilient, more private, and more capable of handling the real-time demands of a world filled with intelligent, connected devices. Edge computing does not replace the cloud — it extends it, creating a seamless continuum of computing resources that spans from the most powerful data centers down to the smallest embedded chips in IoT devices.

Understanding the Edge Computing Stack

- Device Edge: The devices themselves — sensors, cameras, wearables, vehicles — that generate data and may perform basic processing on-device.

- Near Edge: Local gateways, edge servers, and micro data centers located within the same facility or campus as the devices, handling real-time processing and local decision-making.

- Far Edge / Regional Edge: Larger edge nodes located in cell towers, telecom facilities, or regional data centers — closer than the cloud but serving a broader geographic area.

- Cloud: Central cloud infrastructure for large-scale analytics, machine learning training, long-term storage, and global coordination.
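One way to reason about the four tiers above is as a placement decision driven by a latency budget. The sketch below assumes made-up round-trip figures for each tier. They are placeholders for illustration, not measurements, and real deployments would also weigh cost, capacity, and data-residency rules.

```python
# Hypothetical round-trip latencies per tier, in milliseconds (illustrative only).
# Ordered from most centralized to most local.
TIERS = [
    ("cloud", 80.0),
    ("far edge", 20.0),
    ("near edge", 5.0),
    ("device edge", 1.0),
]


def choose_tier(latency_budget_ms: float) -> str:
    """Pick the most centralized tier whose assumed latency fits the budget."""
    for name, latency_ms in TIERS:
        if latency_ms <= latency_budget_ms:
            return name
    raise ValueError("no tier meets this latency budget")


print(choose_tier(200))  # analytics job with a loose budget -> cloud
print(choose_tier(10))   # real-time control loop -> near edge
```

Preferring the most centralized tier that still meets the budget reflects a common design choice: centralized resources are easier to manage, so work moves toward the device only when latency demands it.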

Why Edge Computing Is an Emerging Technology Right Now

Edge computing is not an entirely new concept — the idea of distributed computing has existed for decades. So why is it emerging as a major technology trend specifically now? The answer lies in the convergence of several powerful forces that have simultaneously created both the need for edge computing and the technical capability to deliver it.

The IoT Explosion

The Internet of Things — the network of physical devices embedded with sensors, software, and connectivity — has grown from a few billion devices a decade ago to an estimated 15 billion connected devices today, with projections suggesting 30 billion or more by 2030. Each of these devices generates data continuously. Routing all of that data to centralized cloud servers for processing is becoming technically and economically impractical. Edge computing is the natural solution: process the data where it is generated, send only what matters to the cloud.

The 5G Revolution

The global rollout of 5G wireless networks is one of the most important enablers of edge computing. 5G delivers not just faster speeds (theoretical peak rates are often cited as up to 100 times those of 4G) but also dramatically lower latency, higher connection density, and network slicing capabilities that allow different types of traffic to be handled with different quality-of-service guarantees. Combined with Multi-access Edge Computing (MEC, originally called Mobile Edge Computing), which places computing resources at or near 5G base stations, 5G and edge computing together create a platform capable of supporting applications that were previously impractical.

The AI at the Edge Movement

Artificial intelligence has become one of the most powerful tools in technology, but running AI models traditionally required enormous computing resources available only in the cloud. The rapid advancement of specialized AI hardware — chips like NVIDIA’s Jetson series, Google’s Edge TPU, Apple’s Neural Engine, and Qualcomm’s AI acceleration platforms — has made it possible to run sophisticated machine learning models directly on edge devices. This “AI at the edge” capability unlocks entirely new categories of intelligent applications that cannot tolerate cloud round-trip latency.

Data Privacy and Sovereignty Concerns

Growing public awareness of data privacy issues, combined with increasingly strict data protection regulations like GDPR in Europe and various national data sovereignty laws, has created powerful incentives to process sensitive data locally rather than sending it to distant cloud servers. Edge computing enables organizations to keep sensitive data — patient medical records, financial transactions, personal biometric data — within controlled local environments, reducing both regulatory risk and the potential impact of data breaches.

Transformative Applications Across Industries

The true measure of any emerging technology is not its technical elegance but its real-world impact. Edge computing is already driving transformation across a remarkable range of industries.

Autonomous Vehicles and Transportation

The self-driving vehicle is perhaps the most visceral demonstration of why edge computing is essential. A modern autonomous vehicle is equipped with dozens of sensors (cameras, radar, LiDAR, ultrasonic detectors, GPS) that, by commonly cited estimates, collectively generate between one and two terabytes of data per hour of driving. This data must be processed continuously, within milliseconds, to detect obstacles, recognize traffic signals, predict the behavior of pedestrians and other vehicles, and make precise steering, acceleration, and braking decisions.
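To put the one-to-two-terabyte figure in network terms, the short calculation below converts it to a sustained data rate. It assumes decimal units (1 TB = 10^12 bytes), and the function name is invented for this example.

```python
def sustained_gbit_per_s(terabytes_per_hour: float) -> float:
    """Convert a data volume per hour into a sustained rate in gigabits per second."""
    bytes_per_s = terabytes_per_hour * 1e12 / 3600  # decimal terabytes, 3600 s per hour
    return bytes_per_s * 8 / 1e9


for tb in (1, 2):
    print(f"{tb} TB/hour is about {sustained_gbit_per_s(tb):.2f} Gbit/s sustained")
```

A continuous uplink of more than 2 Gbit/s per vehicle is far beyond what a moving car can rely on, which is another reason the raw sensor stream has to be handled onboard.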

None of this processing can wait for a cloud round-trip. All critical decision-making happens on powerful onboard computers — edge computers embedded in the vehicle itself — in real time. Meanwhile, aggregated driving data is periodically uploaded to the cloud to improve the AI models that power the vehicle’s perception and decision systems. Edge computing in the vehicle, cloud computing for learning and improvement — a perfect division of labor.

Smart Manufacturing and Industry 4.0

The fourth industrial revolution — Industry 4.0 — is transforming manufacturing through the integration of cyber-physical systems, IoT, and artificial intelligence. Edge computing is at the heart of this transformation. On modern factory floors, edge computing powers real-time quality control systems that use computer vision to detect defects in products as they move down production lines at high speed. It enables predictive maintenance systems that monitor the vibration, temperature, and electrical signatures of machinery to predict failures hours or days before they occur, preventing costly downtime.
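As a rough illustration of the predictive maintenance idea, the sketch below flags vibration readings that deviate strongly from a rolling baseline using a simple z-score. This is a deliberately basic stand-in. Production systems typically use richer signal processing and trained models, and the class name, window size, and threshold here are assumptions.

```python
from collections import deque
from statistics import mean, stdev


class VibrationMonitor:
    """Flag readings that deviate strongly from the recent baseline."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0):
        self.history = deque(maxlen=window)  # rolling window of normal readings
        self.z_threshold = z_threshold

    def is_anomalous(self, value: float) -> bool:
        anomalous = False
        if len(self.history) >= 5:  # wait until a minimal baseline exists
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                anomalous = True
        if not anomalous:
            self.history.append(value)  # keep outliers out of the baseline
        return anomalous


monitor = VibrationMonitor()
readings = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 5.0, 1.02]
print([monitor.is_anomalous(r) for r in readings])
```

Because the check needs only a small window of recent values, it can run on a modest gateway next to the machine and raise an alert without any network dependency.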

Collaborative robots — cobots — that work alongside human workers rely on edge computing to process sensor data and make safety-critical decisions without any cloud dependency. A robot that must stop instantly when a human enters its workspace cannot afford even a 50-millisecond cloud round-trip. Edge computing makes these human-robot interactions safe and seamless.

Healthcare and Precision Medicine

Healthcare is one of the sectors where edge computing’s impact is most profound and most personal. Remote patient monitoring systems equipped with wearable sensors continuously track vital signs — heart rate, blood pressure, blood oxygen, ECG — and process this data at the edge to detect dangerous changes in real time. A wearable cardiac monitor can detect a potentially fatal arrhythmia and alert medical staff within seconds, without sending data to the cloud first.

Robotic surgery systems, which allow surgeons to perform delicate procedures remotely using robotic instruments, require very low and highly predictable latency to translate the surgeon’s hand movements into precise robotic actions. Any perceptible delay would make such systems unusable and dangerous. Edge computing, with computing resources located within the same hospital or surgical facility as the robot, keeps the control loop local and helps make remote robotic surgery practical and safe.

Retail and the Intelligent Store

The retail industry is being transformed by edge computing-powered intelligent store technologies. Computer vision systems analyze shopper behavior, track inventory levels in real time, and enable checkout-free shopping experiences where customers simply pick up items and walk out, with payment processed automatically. These systems process video feeds from hundreds of cameras in real time — a task requiring enormous computational power that must happen locally, not in the cloud, to deliver the seamless real-time experience shoppers expect.

Energy and Smart Grid Management

Modern electrical grids are becoming increasingly complex, integrating energy from diverse and intermittent renewable sources like solar and wind alongside traditional generation. Managing this complexity in real time — balancing supply and demand, responding to sudden changes in generation or consumption, preventing blackouts — requires edge computing systems distributed throughout the grid that can make autonomous decisions in milliseconds without waiting for central cloud coordination.

The Technical Challenges Edge Computing Must Overcome

For all its promise, edge computing faces real and significant technical challenges that must be addressed for the technology to reach its full potential.

Security at Scale

Every edge node is a potential security vulnerability. Unlike centralized cloud data centers, which can be protected with layers of physical and cyber security, edge nodes are often deployed in accessible public locations — cell towers, retail stores, street furniture, vehicles — where they may be physically tampered with. Securing thousands or millions of distributed edge nodes requires new approaches to device authentication, software integrity verification, encrypted communications, and automated security patch deployment. This is one of the most active areas of research and development in the edge computing field.
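Software integrity verification, one of the approaches listed above, can be sketched with Python's standard `hmac` and `hashlib` modules. This example assumes a shared secret key between the publisher and the edge node, which is a simplification. Real deployments generally favor asymmetric signatures and hardware-backed key storage so that a compromised node cannot forge updates. The function names are invented for this example.

```python
import hashlib
import hmac


def sign_update(payload: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag for an update payload (publisher side)."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_update(payload: bytes, key: bytes, expected_tag: str) -> bool:
    """Return True only if the payload matches the tag (edge node side)."""
    actual = sign_update(payload, key)
    # compare_digest runs in constant time to avoid leaking timing information.
    return hmac.compare_digest(actual, expected_tag)


key = b"demo-key-for-illustration-only"
tag = sign_update(b"firmware v2", key)
print(verify_update(b"firmware v2", key, tag))           # untouched payload
print(verify_update(b"firmware v2 tampered", key, tag))  # modified payload
```

The node refuses to install anything that fails the check, so an attacker with physical or network access cannot simply swap in modified software.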

Distributed Management Complexity

Managing a fleet of distributed edge nodes — deploying software updates, monitoring health, troubleshooting failures, optimizing performance — is orders of magnitude more complex than managing a centralized cloud infrastructure. Organizations deploying edge computing at scale need sophisticated orchestration platforms, automated management tools, and new operational practices to handle this complexity without requiring armies of field technicians.
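Health monitoring is usually the first building block of such tooling. The sketch below assumes each node reports a heartbeat timestamp to a central registry and that a node is treated as stale after 60 seconds of silence. Both the data layout and the timeout are assumptions for illustration.

```python
def stale_nodes(last_seen: dict[str, float], now: float, timeout_s: float = 60.0) -> list[str]:
    """Return the IDs of nodes whose last heartbeat is older than the timeout."""
    return sorted(node for node, ts in last_seen.items() if now - ts > timeout_s)


# Heartbeat times in seconds; node IDs are hypothetical.
heartbeats = {"store-014": 100.0, "tower-207": 30.0, "plant-003": 10.0}
print(stale_nodes(heartbeats, now=100.0))
```

An orchestration platform would run a check like this continuously and trigger automated remediation, reserving field visits for nodes that do not recover.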

Intermittent Connectivity

Edge computing environments often experience unreliable network connectivity. A remote agricultural sensor, a vehicle in a tunnel, or an offshore industrial platform may have intermittent or limited connectivity to both the cloud and other edge nodes. Edge systems must be designed to operate autonomously during connectivity outages, synchronize state when connectivity is restored, and handle conflicting data that may have accumulated during disconnected periods.
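The store-and-forward behavior described here can be sketched as a small buffer that queues updates while offline and merges them when the link returns. The merge rule below is last-write-wins by timestamp, which is only one simple conflict policy and depends on reasonably synchronized clocks. The class and its data layout are assumptions for this example.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeBuffer:
    """Queue updates while offline and flush them when the link returns."""
    pending: list[tuple[str, float, float]] = field(default_factory=list)

    def record(self, key: str, value: float, timestamp: float) -> None:
        """Store an update locally; no network access is needed."""
        self.pending.append((key, value, timestamp))

    def sync(self, cloud_state: dict[str, tuple[float, float]]) -> None:
        """Merge pending updates into cloud_state; the newest timestamp wins."""
        for key, value, ts in self.pending:
            current = cloud_state.get(key)
            if current is None or ts > current[1]:
                cloud_state[key] = (value, ts)
        self.pending.clear()


buffer = EdgeBuffer()
buffer.record("valve", 1.0, timestamp=10.0)  # recorded while offline
buffer.record("pump", 0.0, timestamp=12.0)
cloud = {"valve": (0.5, 11.0)}  # the cloud received a newer valve value meanwhile
buffer.sync(cloud)
print(cloud)
```

The stale valve update is discarded and the pump update is accepted. Systems that cannot tolerate lost writes tend to use version vectors or mergeable data types in place of plain timestamps.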

Standardization and Interoperability

The edge computing ecosystem is currently fragmented across dozens of competing platforms, frameworks, and protocols from different hardware manufacturers, software vendors, and cloud providers. This fragmentation makes it difficult to build interoperable systems and increases the complexity and cost of edge deployments. Industry-wide standardization efforts are underway through organizations like the Linux Foundation’s LF Edge project and the Industry IoT Consortium (formerly the Industrial Internet Consortium), but achieving true interoperability across a diverse and competitive landscape will take considerable time.

The Road Ahead: Edge Computing’s Future

Looking forward, the trajectory of edge computing is clearly upward and accelerating. Market research firms project the global edge computing market will grow from approximately 60 billion dollars in 2024 to over 230 billion dollars by 2030 — a compound annual growth rate exceeding 25 percent. This growth will be driven by continued IoT proliferation, 5G expansion, AI advancement, and the digitization of industries that have been relatively untouched by previous waves of technology.
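The growth rate quoted above can be checked directly from the two market figures. The snippet uses the standard compound annual growth rate formula, with the six-year span from 2024 to 2030 as the period.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.25 means 25 percent)."""
    return (end_value / start_value) ** (1 / years) - 1


rate = cagr(60, 230, 2030 - 2024)  # market size in billions of dollars
print(f"{rate:.1%}")  # roughly 25.1%
```

The result sits just above 25 percent, consistent with the projection as stated.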

Several specific developments will define the next phase of edge computing’s evolution. Tiny machine learning — running AI models on microcontrollers with as little as 256 kilobytes of memory — will bring intelligence to the most constrained edge devices, from medical implants to agricultural soil sensors. Quantum edge computing represents a longer-term horizon where quantum processors at the edge could solve optimization problems beyond the reach of classical computers. Neuromorphic chips — processors that mimic the architecture of the human brain — promise to dramatically reduce the energy consumption of edge AI, enabling always-on intelligent devices that run on harvested ambient energy.
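To see what a 256-kilobyte budget means in practice, the sketch below estimates the storage needed for a model's weights at different numeric precisions. The 200,000-parameter model is hypothetical, and the estimate ignores activations, buffers, and runtime overhead, all of which consume memory on a real microcontroller.

```python
def model_size_kb(num_parameters: int, bits_per_weight: int) -> float:
    """Approximate weight storage in kilobytes (1 KB = 1024 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1024


def fits(num_parameters: int, bits_per_weight: int, budget_kb: float = 256) -> bool:
    """Check whether the weights alone fit within the memory budget."""
    return model_size_kb(num_parameters, bits_per_weight) <= budget_kb


params = 200_000  # hypothetical small model
for bits in (32, 8):
    size = model_size_kb(params, bits)
    print(f"{bits}-bit weights: {size:.1f} KB, fits in 256 KB: {fits(params, bits)}")
```

Storing weights as 8-bit integers in place of 32-bit floats cuts the footprint by a factor of four, which is why quantization is a common step when preparing models for constrained devices.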

The relationship between edge computing and cloud computing will continue to evolve from competition to deep integration. The leading cloud providers are all investing heavily in edge extensions of their platforms — AWS Outposts, Azure Arc, and Google Distributed Cloud — that bring cloud management, security, and services to edge locations. The future is not edge versus cloud but a seamlessly managed continuum across both.

Conclusion

Edge computing is not just another technology trend. It is a fundamental infrastructure shift that will determine the capabilities of the digital world for decades to come. By bringing intelligence, processing, and decision-making to the physical edge of the network — to the places where data is born and where it must be acted upon — edge computing overcomes the inherent limitations of cloud-centric architectures and unlocks a generation of applications that will touch every aspect of human life.

From the autonomous vehicles navigating our roads to the robots building our products, from the wearables monitoring our health to the smart grids powering our cities, edge computing is the invisible infrastructure that will make the intelligent, connected, responsive world of tomorrow possible today.

The challenges are real, the investment required is substantial, and the path to full maturity will take years. But the direction is clear, the momentum is powerful, and the potential is extraordinary. Edge computing is not coming — it is already here, already transforming the industries that will define the next era of human progress.

The future is not in the cloud. The future is at the edge.
