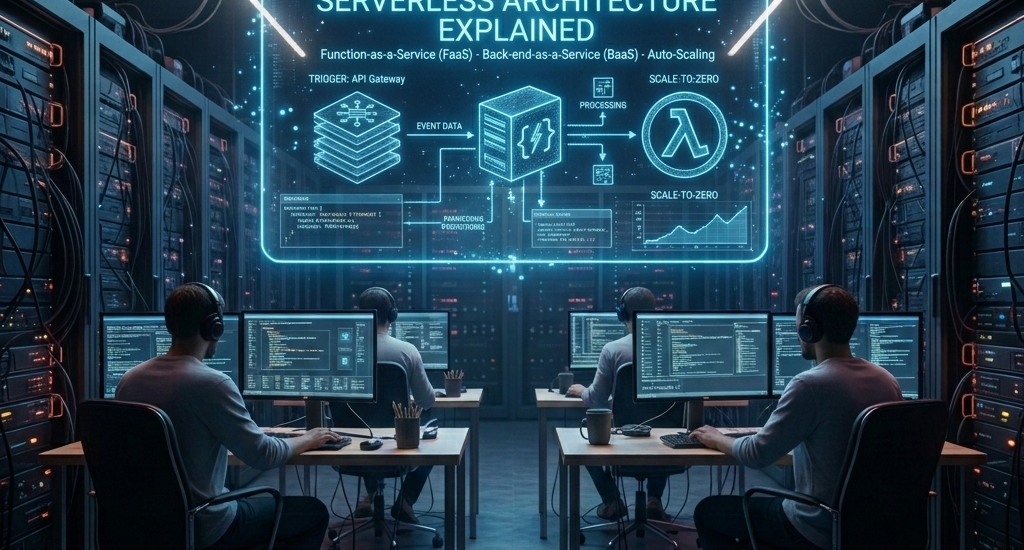

Serverless Architecture Explained: The Complete Beginner’s Guide

How Modern Applications Run Without Managing a Single Server

Introduction

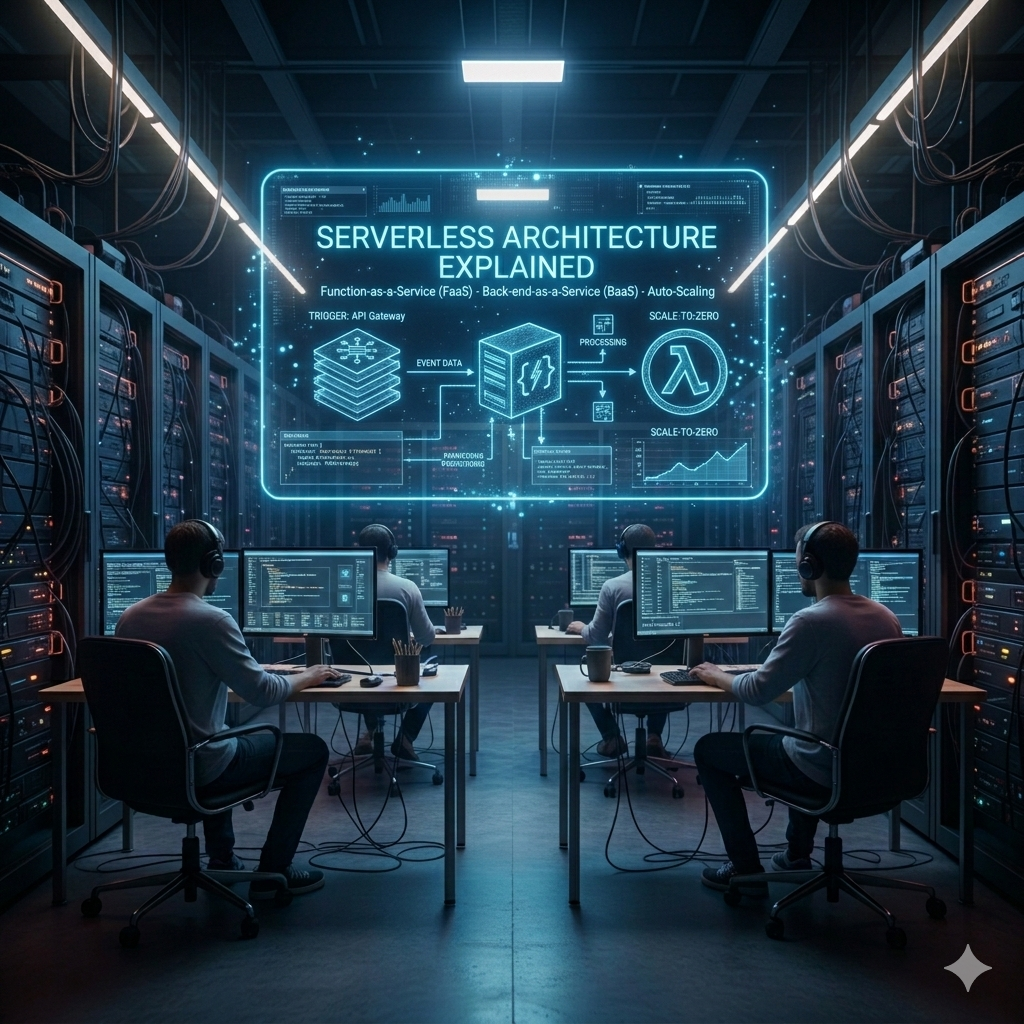

Serverless Architecture Explained

Picture this: you have a brilliant idea for a web application. You build it, you test it, and now you want to launch it to the world. In the traditional model, before a single user can visit your app, you need to set up servers, configure operating systems, install software, manage security patches, monitor uptime, plan for traffic spikes, and worry about what happens when your server crashes at 3 AM. All of this infrastructure work has nothing to do with your actual idea — but it consumes enormous amounts of time, money, and expertise.

Now imagine a different world: you write your application code, deploy it with a single command, and a giant technology company handles everything else — the servers, the scaling, the security patches, the uptime, the load balancing — all of it. You pay only for the exact milliseconds your code actually runs. When nobody is using your app, you pay nothing. When a million people suddenly use it simultaneously, it scales automatically without you lifting a finger.

This is not a fantasy. This is serverless architecture — and it is one of the most important paradigm shifts in the history of software development.

In this comprehensive guide, we will explore exactly what serverless architecture is, how it works under the hood, why so many companies are adopting it, where it excels and where it struggles, and how you can get started building serverless applications today. Whether you are a developer, a startup founder, or simply someone curious about modern technology, this article will give you a thorough and clear understanding of serverless computing.

What Is Serverless Architecture?

Despite its name, serverless architecture does not mean there are no servers. Servers absolutely exist — they are just someone else’s problem. Serverless means that developers no longer need to think about, provision, manage, or scale the underlying server infrastructure. The cloud provider handles all of that automatically and invisibly.

In a serverless model, developers write individual functions — small, focused pieces of code designed to perform one specific task — and deploy them to a cloud platform. The platform takes care of running these functions in response to events, scaling them automatically based on demand, and charging only for the actual compute time consumed. When no requests are coming in, the functions are not running and no cost is incurred.

The most common form of serverless computing is Function as a Service (FaaS). Platforms like AWS Lambda, Google Cloud Functions, and Microsoft Azure Functions are the leading FaaS offerings. But serverless is a broader concept that also includes managed databases, authentication services, file storage, and other backend services where the infrastructure management is completely abstracted away from the developer.

The serverless model sits at the top of a progression in how computing has evolved. First came physical servers that companies owned and managed themselves. Then came virtual machines that made servers more flexible. Then came cloud computing, where companies rented virtual machines from providers like AWS and Azure. Then came containers, which made applications more portable and efficient. Serverless is the next step: you do not manage infrastructure at all — you just write code and run it.

How Serverless Architecture Works

To understand how serverless works, it helps to trace the lifecycle of a single serverless function from creation to execution.

A developer writes a function — a piece of code that accepts input, performs some logic, and returns output. This function might be written in JavaScript, Python, Java, Go, C#, or several other supported languages depending on the platform. The function is designed to do one thing well: process a payment, resize an image, send an email, query a database, validate user input, or any other discrete task.

The developer deploys this function to a serverless platform like AWS Lambda. The function sits dormant — no servers are running, no costs are accumulating. Then an event occurs: an HTTP request comes in from a user, a file is uploaded to cloud storage, a message arrives in a queue, a database record changes, or a scheduled timer fires. The serverless platform detects this event and instantly spins up an execution environment for the function.

The function executes, performs its task, returns its result, and the execution environment shuts down. The entire process might take milliseconds. The developer is charged for exactly the compute time consumed — often measured in increments of one millisecond — plus the number of invocations. The platform handles everything else: provisioning the execution environment, routing the event to the right function, scaling to handle thousands of simultaneous invocations, and cleaning up afterward.

The Event-Driven Model

Serverless architecture is fundamentally event-driven. Functions are triggered by events rather than running continuously. This is a significant mental shift from traditional server-based thinking, where a server runs 24/7 waiting for requests. In serverless, nothing runs until something happens. This event-driven model is both the source of serverless computing’s efficiency and one of the key adjustments developers must make when adopting it.

Common event sources that can trigger serverless functions include HTTP requests via API gateways, file uploads to object storage (like Amazon S3), database change streams, message queue events, authentication events, IoT sensor data, scheduled cron jobs, and webhooks from third-party services. The rich variety of event sources makes serverless extremely versatile for building reactive, real-time systems.

Key Benefits of Serverless Architecture

1. Zero Infrastructure Management

The most immediate and obvious benefit of serverless is that developers are completely freed from infrastructure concerns. No servers to provision, no operating systems to patch, no capacity planning required, no load balancers to configure, no monitoring agents to install. The cloud provider handles all of this automatically. Development teams can focus entirely on writing application logic and delivering business value rather than managing infrastructure. For small teams and startups especially, this is a game-changing advantage.

2. Automatic Scaling

Serverless platforms scale automatically and instantaneously in response to demand. Whether your function is invoked once per day or one million times per second, the platform handles the scaling without any configuration or intervention from you. A startup that suddenly goes viral and experiences a thousand-fold increase in traffic overnight will not see their serverless application go down — it will simply scale up automatically to meet the demand. This kind of elastic scaling is extraordinarily difficult to achieve with traditional server infrastructure and requires significant planning and investment.

3. Pay Per Use — True Cost Efficiency

Traditional servers cost money whether they are being used or not. A server sitting idle overnight, on weekends, or during off-peak hours still consumes resources and incurs charges. Serverless computing introduced a fundamentally different pricing model: you pay only for the actual compute time your functions consume. If your application has zero traffic for eight hours overnight, you pay absolutely nothing for those eight hours. For workloads that are intermittent, unpredictable, or have significant idle periods, serverless can reduce infrastructure costs by 70 to 90 percent compared to always-on server deployments.

4. Faster Development and Deployment

Without the overhead of infrastructure setup and management, development cycles become dramatically faster. Developers can go from idea to deployed code in minutes rather than hours or days. Serverless architectures also naturally encourage breaking applications into small, independent functions, which are easier to develop, test, debug, and deploy than large monolithic applications. Many serverless platforms support deploying updates in seconds, enabling extremely rapid iteration.

5. Built-In High Availability

Major serverless platforms run across multiple availability zones and data centers by default. This means your serverless application automatically has high availability and fault tolerance built in without any additional configuration or cost. If one data center experiences a problem, the platform seamlessly routes requests to another. Achieving this level of resilience with traditional infrastructure requires significant architectural planning and ongoing operational effort.

Popular Serverless Platforms

AWS Lambda

Amazon Web Services Lambda, launched in 2014, was the service that popularized serverless computing and remains the market leader. Lambda supports a wide range of programming languages including Node.js, Python, Java, Go, Ruby, and C#. It integrates deeply with the broader AWS ecosystem — S3, DynamoDB, API Gateway, SQS, SNS, and dozens of other services can all trigger Lambda functions. AWS Lambda’s generous free tier (one million free invocations per month) makes it accessible for learning and small projects.

Google Cloud Functions

Google Cloud Functions is Google’s serverless compute offering, tightly integrated with Google Cloud Platform services like Firebase, BigQuery, Cloud Storage, and Pub/Sub. It supports Node.js, Python, Go, Java, Ruby, PHP, and .NET. Google Cloud Functions is particularly popular in the Firebase ecosystem for building mobile and web application backends, where it provides a seamless serverless backend for Firebase-powered apps.

Microsoft Azure Functions

Azure Functions is Microsoft’s serverless platform, offering deep integration with the Microsoft ecosystem including Azure Storage, Azure Service Bus, Cosmos DB, and Microsoft 365 services. It supports a particularly wide range of languages and offers a unique “durable functions” feature that allows stateful workflows to be orchestrated in a serverless environment — solving one of the classic challenges of stateless serverless functions.

Cloudflare Workers

Cloudflare Workers is a newer but rapidly growing serverless platform that runs functions at the network edge — on Cloudflare’s global network of data centers distributed around the world. This means functions execute extremely close to end users, achieving ultra-low latency that centralized cloud functions cannot match. Workers is an excellent choice for performance-critical use cases like API routing, authentication, and content personalization.

Real-World Use Cases for Serverless

Serverless architecture is not a one-size-fits-all solution, but it excels in a surprisingly wide range of scenarios that are common across many industries.

REST APIs and Web Backends

One of the most common serverless use cases is building REST APIs. Each API endpoint becomes a separate serverless function — GET /users triggers one function, POST /orders triggers another. An API Gateway service (like AWS API Gateway) handles routing incoming HTTP requests to the appropriate functions. This approach scales effortlessly, costs next to nothing during quiet periods, and allows different parts of the API to be developed and deployed independently.

Image and Video Processing

Media processing is a perfect fit for serverless. When a user uploads a profile photo, a serverless function is triggered automatically to resize it into multiple dimensions, compress it, and store the results. When a video is uploaded to a streaming platform, serverless functions handle transcoding, thumbnail generation, and metadata extraction. These tasks are intermittent, computationally intensive for short bursts, and exactly the kind of event-driven workload where serverless shines.

Real-Time Data Processing

Serverless functions are widely used for processing streaming data in real time. IoT sensors sending telemetry data, financial transactions flowing through a payment system, user activity events from a web application, and social media feeds are all examples of data streams that can be processed with serverless functions. Each event triggers a function that transforms, filters, or stores the data — scaling automatically to handle millions of events per second if needed.

Scheduled Tasks and Automation

Cron jobs and scheduled tasks — sending daily email reports, running nightly database cleanups, generating weekly analytics summaries, checking the health of third-party services — are an excellent fit for serverless. A function can be triggered on a schedule with zero infrastructure overhead, executing for a few seconds or minutes and then disappearing, at a tiny fraction of the cost of running a dedicated server for the same purpose.

Chatbots and Voice Assistants

Conversational interfaces like chatbots and voice assistants have unpredictable, bursty traffic patterns — quiet for hours, then suddenly receiving hundreds of messages simultaneously. Serverless handles this naturally, scaling instantly to meet demand spikes without pre-provisioning capacity. Many of the most popular chatbots and voice assistant skills built for platforms like Amazon Alexa and Google Assistant are powered by serverless functions.

Challenges and Limitations of Serverless

Serverless architecture is powerful, but it is not without its trade-offs and limitations. Understanding these challenges is essential for making informed decisions about when and how to use serverless.

Cold Starts

One of the most discussed limitations of serverless is the “cold start” problem. When a serverless function has not been invoked for a period of time, the execution environment is deallocated. The next invocation must wait for a new environment to be provisioned and the function to initialize — a process that can take anywhere from a few hundred milliseconds to several seconds depending on the runtime, the amount of code, and the cloud provider. For latency-sensitive applications, cold starts can cause noticeable delays for occasional users. Cloud providers have made significant progress in reducing cold start times, and techniques like keeping functions “warm” with scheduled pings can mitigate the issue, but it remains a real consideration.

Vendor Lock-In

Serverless applications tend to become deeply integrated with the specific services and event sources of a particular cloud provider. A Lambda function that integrates with DynamoDB, S3, SQS, and API Gateway is very much an AWS application — migrating it to Google Cloud or Azure would require significant rewriting. This vendor lock-in is a real risk that organizations must weigh carefully, particularly for long-lived applications where cloud provider relationships may change over time.

Limited Execution Duration

Serverless functions are designed for short-lived executions. AWS Lambda, for example, has a maximum execution time of 15 minutes. Google Cloud Functions allows up to 60 minutes. This means serverless is not suitable for long-running processes — video encoding jobs that take hours, machine learning training runs, or complex data migrations that must execute continuously for extended periods. These workloads still need traditional servers or container-based solutions.

Debugging and Observability Complexity

Debugging a distributed system of serverless functions is significantly more challenging than debugging a traditional monolithic application. When a request flows through multiple functions — each potentially logging to different places, executing in isolated environments, and failing silently — tracing the root cause of a bug can be genuinely difficult. Good observability tooling — distributed tracing, centralized logging, and function-level monitoring — is essential for serverless applications in production, and setting this up requires investment and expertise.

Statelessness

Serverless functions are stateless by design. Each function invocation is independent — no information from a previous invocation is available to the next one. Applications that need to maintain state between invocations must use external storage solutions like databases, caches, or object storage to persist and retrieve state. This is a straightforward pattern once understood, but it requires a different way of thinking about application architecture compared to traditional stateful server applications.

Serverless vs. Traditional Architecture vs. Containers

It is worth clarifying where serverless fits relative to other modern architecture approaches, because these technologies are often discussed together and the differences matter enormously in practice.

Traditional servers give you maximum control and are ideal for applications with consistently high traffic, long-running processes, or workloads that require full control over the execution environment. The trade-off is significant operational overhead — someone must manage, patch, monitor, and scale the servers.

Containers with Kubernetes offer a middle ground — more operational efficiency than traditional servers, excellent portability, and the ability to run any kind of workload including long-running processes. Containers require more infrastructure management than serverless but offer more control, better predictable performance, and no cold start issues.

Serverless offers the least operational overhead and the best cost efficiency for intermittent workloads, but comes with the limitations of cold starts, execution time limits, and vendor lock-in. It excels for event-driven, stateless, short-lived functions and is most powerful when combined with other managed cloud services.

In practice, modern applications often use all three approaches together. A typical cloud-native application might use containers for the main application server, serverless functions for background processing and event handling, and traditional managed databases for data persistence — each technology applied where it fits best.

Getting Started with Serverless

The best way to understand serverless is to build something with it. The barrier to entry is remarkably low — you can deploy your first serverless function in under 30 minutes with no prior experience.

Start with AWS Lambda and the AWS Free Tier, which gives you one million free Lambda invocations per month — more than enough for learning and experimentation. The AWS console provides a browser-based code editor where you can write, deploy, and test Lambda functions without installing anything locally.

For a more complete development experience, the Serverless Framework is an open-source tool that simplifies deploying serverless applications to any cloud provider. It lets you define your functions, event sources, and cloud resources in a single YAML configuration file and deploy everything with a single command. The Serverless Framework supports AWS, Google Cloud, Azure, and several other providers, reducing vendor lock-in at the tooling level.

Another excellent starting point is Firebase with Google Cloud Functions, particularly if you are building a mobile or web application. Firebase provides a complete serverless backend — authentication, real-time database, file storage, and hosting — all managed services with no infrastructure to configure. Cloud Functions extend this with custom backend logic triggered by Firebase events.

The Future of Serverless

Serverless computing is not a passing trend — it is a fundamental direction in how computing infrastructure is evolving, and its trajectory points strongly upward. Cloud providers are investing heavily in reducing cold start times, increasing execution limits, improving observability tools, and expanding the range of workloads that serverless can handle effectively.

Edge serverless — running functions at network edge locations distributed around the globe — is one of the most exciting emerging developments. Platforms like Cloudflare Workers and AWS Lambda@Edge bring serverless execution within milliseconds of end users anywhere in the world, enabling a new generation of performance-critical serverless applications that were previously impractical.

The rise of WebAssembly (Wasm) as a serverless runtime is another significant trend. WebAssembly enables near-native performance, sub-millisecond cold starts, and language flexibility that traditional serverless runtimes cannot match — potentially solving the cold start problem that has been one of serverless computing’s most persistent challenges.

Conclusion

Serverless architecture represents one of the most significant shifts in how software is built and deployed in the modern era. By abstracting away all infrastructure concerns, enabling true pay-per-use pricing, and providing automatic scaling from zero to millions of requests, serverless has democratized the ability to build and run scalable applications — making capabilities that once required large DevOps teams accessible to individual developers and small startups.

The limitations are real: cold starts, vendor lock-in, execution time limits, and the stateless programming model all require careful consideration. Serverless is not the right tool for every job, and the most sophisticated cloud architectures combine serverless with containers and traditional infrastructure in a thoughtfully designed hybrid approach.

But for the right workloads — event-driven functions, API backends, media processing, real-time data pipelines, scheduled automation — serverless is often the fastest, cheapest, and simplest solution available. The developer who understands when and how to apply serverless architecture has a powerful tool in their toolkit for building modern, scalable, cost-efficient applications.

The servers are still there. You just never have to think about them again.

Serverless Architecture Explained